AI Avatar creates a reusable presenter from a short reference clip or image/voice pair, then generates talking-head videos from a script. It is best for founder updates, explainers, UGC ads, training videos, and repeatable spokesperson content.

How It Works

The AI Avatar flow has two parts:

- Create an avatar: provide a 4-15 second source video, or an image plus a 4-15 second voice reference. Mosaic processes this into reusable avatar references.

- Generate a video: select the avatar profile, write the script, choose the model and take style, then run the agent.

Avatar Profiles

Avatars live in your workspace library and can be used from the editor or API. Mosaic prepares the reference video, reference image, and voice audio during processing.

Recommended source video:

- 4-15 seconds long

- Exactly one person on screen

- Clear face, direct-to-camera framing

- Natural speech with visible mouth movement from that person

- Clean single-speaker audio from that person; avoid background speakers, music, heavy noise, or dubbing

- Minimal cuts, overlays, or visual effects
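The measurable parts of this checklist can be checked before uploading. The sketch below is a local pre-flight check only, not part of the Mosaic API; the `SourceClip` fields are hypothetical names, and it assumes you already know the clip's duration and how many people and speakers it contains.

```python
from dataclasses import dataclass
from typing import List

MIN_SECONDS = 4.0   # lower bound from the guidance above
MAX_SECONDS = 15.0  # upper bound from the guidance above


@dataclass
class SourceClip:
    # Hypothetical description of a clip; you supply these values yourself.
    duration_seconds: float
    people_on_screen: int
    speakers_in_audio: int


def check_source_clip(clip: SourceClip) -> List[str]:
    """Return a list of problems; an empty list means the clip fits the guidance."""
    problems = []
    if not MIN_SECONDS <= clip.duration_seconds <= MAX_SECONDS:
        problems.append("duration should be 4-15 seconds")
    if clip.people_on_screen != 1:
        problems.append("exactly one person should be on screen")
    if clip.speakers_in_audio != 1:
        problems.append("audio should contain a single speaker")
    return problems
```

Framing, mouth visibility, and visual effects still need a manual look; this only covers the numeric criteria.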

Single Take vs Multitake

Single take asks the model for one continuous delivery. Use it for short scripts where a natural uninterrupted performance matters most.

Multitake lets Mosaic split longer scripts into sentence-complete chunks, render multiple takes, normalize them, and stitch the final output. Use it for longer scripts, retries, or workflows where reliability matters more than one uninterrupted provider clip.

Seedance 2 and Seedance 2 Fast support both modes in Mosaic. In multitake mode, Mosaic still preserves the avatar identity and voice while chunking the script behind the scenes.

Model Options

Seedance 2 and Seedance 2 Fast are the recommended AI Avatar models. They are Mosaic’s most cost-effective and life-like avatar generation options, and they support both single take and multitake generation with avatars from your library.| Model | Best For | Notes |

|---|---|---|

seedance-2-fast | Recommended fast avatar generation | Most cost-effective option. Fast provider output is upscaled to 1080p when needed. |

seedance-2 | Recommended high-quality avatar generation | Most life-like option. Supports 16:9 and 9:16. |

avatar-v4 | Legacy Longcat 1 single-take avatars | Requires a voice reference or avatar profile. |

fabric-1 | Legacy lip-sync style single-take generation | Can use an uploaded voiceover. |

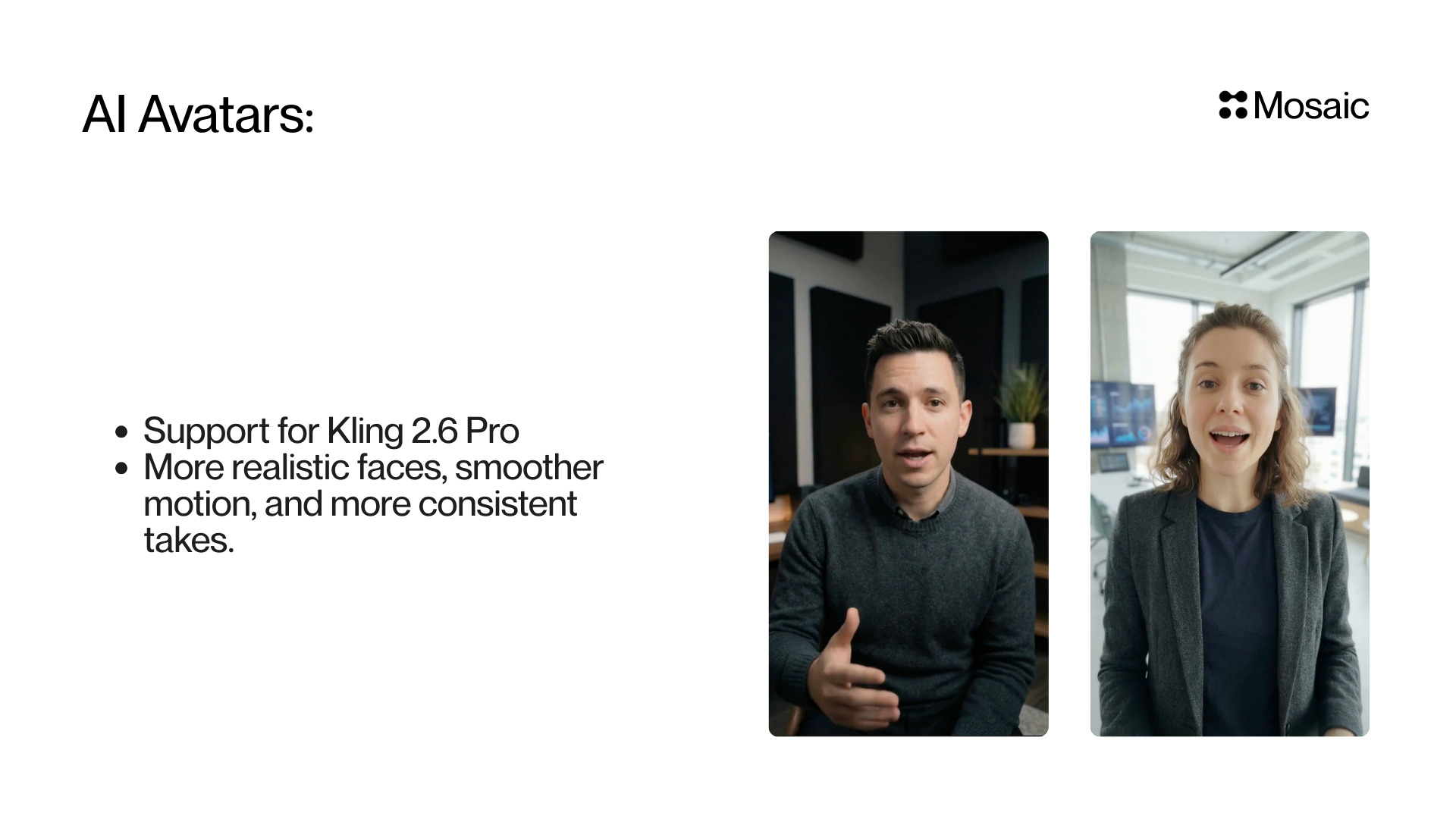

kling-2.6-pro | Legacy multitake avatar generation | Supports product and character references. |

kling-3-standard | Legacy multitake avatar generation | Shown as Kling 3 in the editor. |

kling-3-pro | Legacy multitake avatar generation | Higher-cost legacy Kling option. |
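The model list describes each model as single-take, multitake, or both. A small lookup like the one below can catch a mismatched `video_model` and `single_take` pair before a request is built. This is a sketch based on one reading of that list: `TAKE_MODES` and `supports_take_mode` are hypothetical names, and whether the API actually rejects the other combinations is an assumption.

```python
from typing import Dict, Set

# Take modes per model, as read from the model descriptions above.
# Assumption: legacy models only support the mode named in their description.
TAKE_MODES: Dict[str, Set[str]] = {
    "seedance-2-fast": {"single", "multi"},
    "seedance-2": {"single", "multi"},
    "avatar-v4": {"single"},
    "fabric-1": {"single"},
    "kling-2.6-pro": {"multi"},
    "kling-3-standard": {"multi"},
    "kling-3-pro": {"multi"},
}


def supports_take_mode(video_model: str, single_take: bool) -> bool:
    """True if the model is described as supporting the requested take mode."""
    mode = "single" if single_take else "multi"
    # Unknown model names fall back to an empty set, so they return False.
    return mode in TAKE_MODES.get(video_model, set())
```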

API Workflow

- Create an avatar with `POST /avatar-profiles/create`.

- Create an agent with `POST /agent/create`, then add an AI Avatar node with `POST /agent/{agent_id}/update`.

- Run the agent with `POST /agent/{agent_id}/run` and no video inputs.
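The three steps above map to four HTTP calls. The sketch below only assembles the method and URL for each call in order. The paths come from the steps above; `BASE_URL` is a hypothetical placeholder, and request bodies and authentication are left out because they are not described here.

```python
from typing import List, Tuple

# Hypothetical placeholder; substitute the real API base URL for your account.
BASE_URL = "https://api.example.invalid"


def avatar_workflow_calls(agent_id: str, base_url: str = BASE_URL) -> List[Tuple[str, str]]:
    """List the calls in order. agent_id is the ID returned by /agent/create."""
    paths = [
        "/avatar-profiles/create",    # 1. create the reusable avatar
        "/agent/create",              # 2a. create the agent
        f"/agent/{agent_id}/update",  # 2b. add the AI Avatar node
        f"/agent/{agent_id}/run",     # 3. run the agent with no video inputs
    ]
    return [("POST", base_url.rstrip("/") + path) for path in paths]
```

In practice the `agent_id` is only known after the second call returns, so a real client would issue these sequentially rather than build them all up front.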

If `processing_status` is `failed`, create a new avatar before using it.

Create an avatar:

Set `video_model: "seedance-2"` for the higher-quality Seedance 2 path. Set `single_take: true` for one continuous delivery, or `false` for multitake chunking.
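As a rough illustration of those two settings, the sketch below builds a node params object for a Seedance 2 run. The parameter names and values are the ones listed under Node params; the helper name `seedance_node_params` is hypothetical, and how this object is wrapped inside the `POST /agent/{agent_id}/update` body is not shown because it is not documented here.

```python
import json


def seedance_node_params(
    avatar_profile_id: str,
    script: str,
    brief: str,
    *,
    high_quality: bool = False,
    single_take: bool = True,
    aspect_ratio: str = "9:16",
) -> dict:
    """Build AI Avatar node params for one of the two Seedance 2 models."""
    return {
        "brief": brief,
        "script": script,
        # "seedance-2" is the higher-quality path; "seedance-2-fast" is the default.
        "video_model": "seedance-2" if high_quality else "seedance-2-fast",
        # True asks for one continuous delivery; False enables multitake chunking.
        "single_take": single_take,
        "aspect_ratio": aspect_ratio,
        "avatar_profile_id": avatar_profile_id,
    }


if __name__ == "__main__":
    params = seedance_node_params(
        "00000000-0000-0000-0000-000000000000",  # placeholder avatar profile ID
        script="Welcome to this week's product update.",
        brief="Short founder update",
        high_quality=True,
        single_take=False,
    )
    print(json.dumps(params, indent=2))
```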

API Info

Node Params & API Details

- Node ID: `b3b4c9e2-2a47-4fa9-8ce8-0c1fa1d7b6ef`

Node params

| Param | Type | Required | Default | Notes |
|---|---|---|---|---|
| `brief` | string | Yes | `""` | High-level intent/context (validated, ~1-1000 chars). |
| `script` | string | Yes | `""` | Spoken script. |
| `video_model` | `"seedance-2-fast" \| "seedance-2" \| "kling-2.6-pro" \| "kling-3-standard" \| "kling-3-pro" \| "fabric-1" \| "avatar-v4"` | No | `"seedance-2-fast"` | Generation model choice. |
| `single_take` | boolean | No | `true` | `true` for one continuous delivery; `false` for multitake chunking/stitching. |
| `aspect_ratio` | `"9:16" \| "16:9" \| "auto"` | No | `"9:16"` | Output framing mode. |
| `avatar_profile_id` | string (UUID) | Conditional | unset | Reusable avatar. Required for Seedance 2 models. |
| `creation_mode` | `"manual" \| "video_reference"` | No | `"manual"` | Avatar generation flow selection. |
| `reference_video_id` | string (UUID) | Conditional | unset | Used when `creation_mode="video_reference"`. |
| `reference_change_request` | string | No | `""` | Optional instructions when using video-reference mode. |
| `product_image_id` | string (UUID) | No | unset | Product reference image. |
| `character_image_id` | string (UUID) | No | unset | Avatar/character image override. |
| `voice_reference_id` | string (UUID) | No | unset | Voice reference asset ID for cloning. |
| `voice_reference_type` | `"audio" \| "video"` | Conditional | unset | Required when `voice_reference_id` is provided. |
| `voiceover_id` | string (UUID) | Conditional | unset | Optional voiceover upload for Fabric 1 lip-sync path. |
| `voiceover_type` | `"audio" \| "video"` | Conditional | unset | Required when `voiceover_id` is provided. |
| `elevenlabs_model_id` | string | No | unset | Explicit ElevenLabs model override. |
| `elevenlabs_voice_settings` | `{stability?: number, similarity_boost?: number, style?: number, use_speaker_boost?: boolean, speed?: number}` | No | unset | Fine-grained TTS tuning object. |
| `voice_dictionary` | `Array<{word: string, audio_id: string, enabled: boolean}>` | No | `[]` | Up to three pronunciation references for Seedance 2. |
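Several of these parameters are conditional on others. The sketch below checks those dependencies client-side before sending a request. It is not Mosaic's own validation: the function name and error strings are hypothetical, the brief length limit is approximate in the source, and treating `reference_video_id` as required in video-reference mode is an assumption.

```python
from typing import List

SEEDANCE_MODELS = {"seedance-2", "seedance-2-fast"}


def validate_node_params(params: dict) -> List[str]:
    """Return a list of problems found in AI Avatar node params (empty if none)."""
    errors = []
    # The docs give the brief length as roughly 1-1000 characters.
    if not 1 <= len(params.get("brief", "")) <= 1000:
        errors.append("brief should be about 1-1000 characters")
    if not params.get("script"):
        errors.append("script is required")
    # video_model defaults to "seedance-2-fast" when omitted.
    model = params.get("video_model", "seedance-2-fast")
    if model in SEEDANCE_MODELS and not params.get("avatar_profile_id"):
        errors.append("avatar_profile_id is required for Seedance 2 models")
    if params.get("voice_reference_id") and not params.get("voice_reference_type"):
        errors.append("voice_reference_type is required with voice_reference_id")
    if params.get("voiceover_id") and not params.get("voiceover_type"):
        errors.append("voiceover_type is required with voiceover_id")
    # Assumption: video-reference mode needs a reference video.
    if params.get("creation_mode") == "video_reference" and not params.get("reference_video_id"):
        errors.append("reference_video_id is expected when creation_mode is video_reference")
    if len(params.get("voice_dictionary", [])) > 3:
        errors.append("voice_dictionary allows up to three entries")
    return errors
```

Returning a list rather than raising on the first problem lets a caller report every issue in one pass.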

Parameter groups

- Core generation: `brief`, `script`, `video_model`, `single_take`, `aspect_ratio`

- Avatar library: `avatar_profile_id`

- Creation flow: `creation_mode`, `reference_video_id`, `reference_change_request`

- Visual references: `product_image_id`, `character_image_id`

- Voice references: `voice_reference_id`, `voice_reference_type`, `voiceover_id`, `voiceover_type`

- Voice tuning: `elevenlabs_model_id`, `elevenlabs_voice_settings`, `voice_dictionary`